Buyer–Seller-Deception-Game Dataset: A new comprehensive dataset for facial expression based deception detection in economic contexts

The paper introduces Layout 1 of our Buyer–Seller-Deception-Game Dataset and analyses different non-verbal cues as a basis for automatic deception detection.

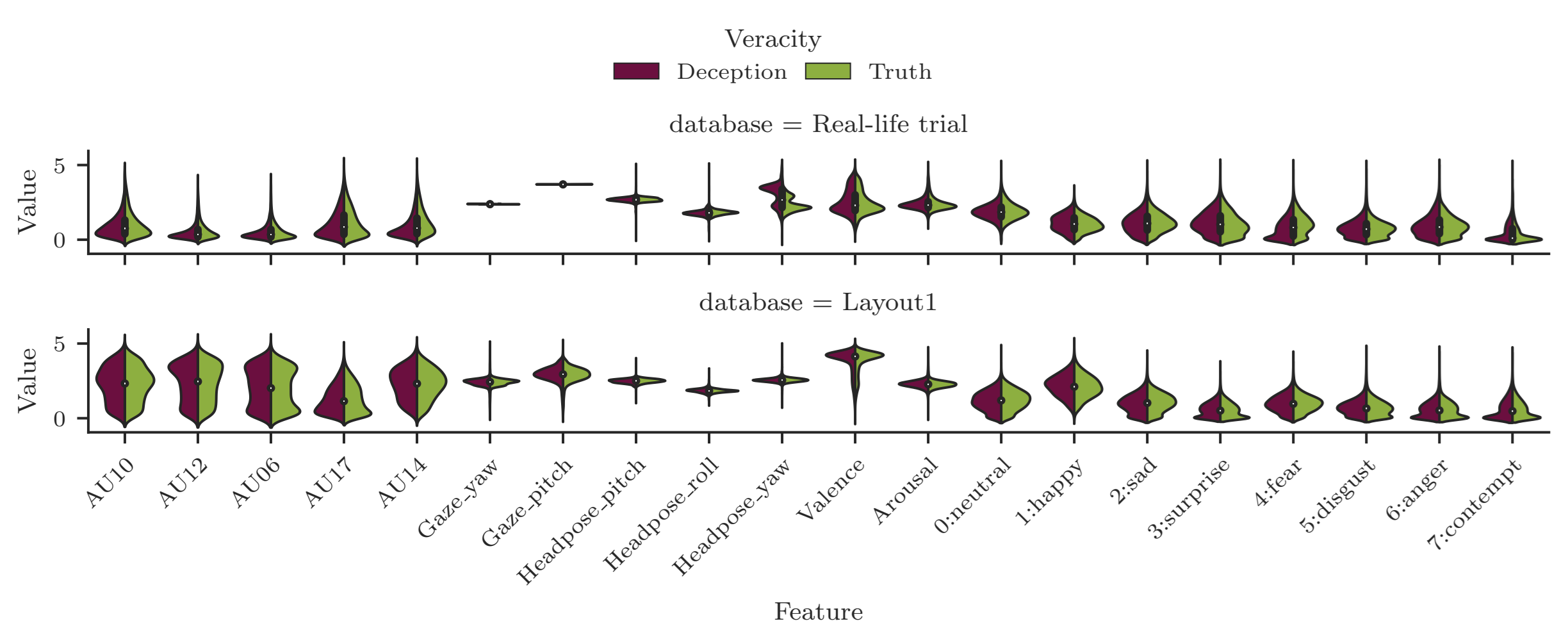

Automated deception detection from video and audio is still a challenging task. Deceptive behavior depends heavily on context, culture, and stakes. To explore this in online interactions such as virtual sales meetings, we created a new high-quality, low-stakes dataset. The current release includes 500 annotated videos (Layout 1); an ongoing extension will bring the total to around 1000 videos once Layout 2 is added. Participants play incentivized online games in which sellers try to persuade buyers, generating naturally motivated deceptive and truthful behavior. We tested various visual and audio features (gaze, head pose, facial Action-Units, and prosody) on our dataset and on a more challenging high-stakes dataset. Results show that OpenFace AU features work best on clean recordings, while CNN-based AU predictors handle harder, lower-quality videos better. Multimodal approaches slightly outperform single-feature methods. The dataset will be freely available to support future research in automated deception detection.

Citing

@article{DINGES2026200636,
  title = {Buyer–Seller-Deception-Game Dataset: A new comprehensive dataset for facial expression based deception detection in economic contexts},
  journal = {Intelligent Systems with Applications},
  volume = {29},
  pages = {200636},
  year = {2026},
  issn = {2667-3053},
  doi = {https://doi.org/10.1016/j.iswa.2026.200636},
  url = {https://www.sciencedirect.com/science/article/pii/S2667305326000116},
  author = {Laslo Dinges and Marc-André Fiedler and Ayoub Al-Hamadi and Dmitri Bershadskyy and Joachim Weimann},
  keywords = {New deception detection dataset, Facial action-units, EmotioNet, Audio, Experimental economics}
}