AI-based bi-modal fusion system for automated clinical pain monitoring

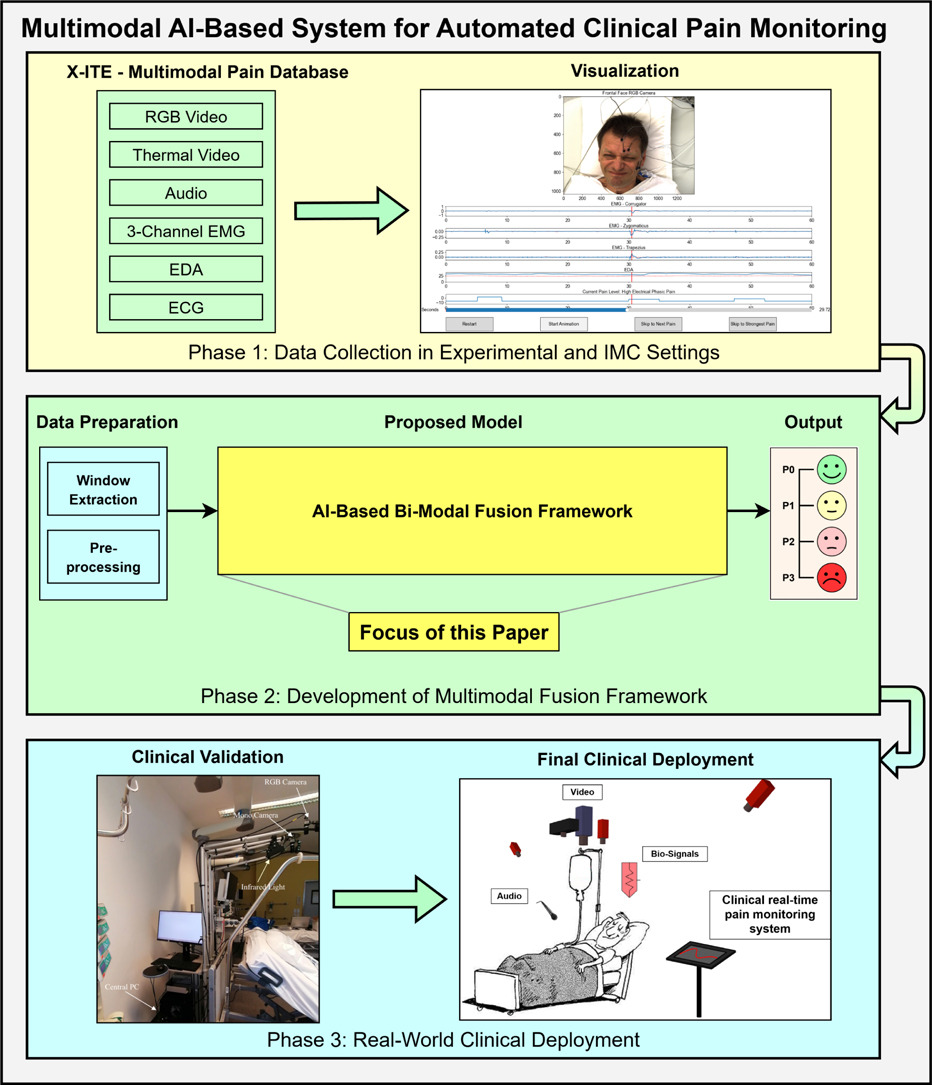

We present a privacy-preserving bi-modal framework for pain intensity classification that fuses EDA and EMG signals using an LSTM network with spatial attention and adaptive weighting, outperforming state-of-the-art methods with improved accuracy, robustness, and computational efficiency across multiple sub-datasets.

Pain is a highly subjective and multidimensional experience involving both physiological and psychological factors. Developing accurate and robust pain monitoring systems is essential for effective assessment and rehabilitation management. Recently, multimodal fusion combining physiological and behavioral signals has gained attention for capturing complex pain responses. Unlike video and audio modalities that may raise privacy concerns, physiological signals such as electrodermal activity (EDA) and electromyography (EMG) offer a privacy-preserving alternative. In this study, we propose a bi-modal fusion framework based on EDA and EMG signals for pain intensity classification. The framework integrates a Long Short-Term Memory (LSTM) network with a spatial attention mechanism to capture both local and global temporal dependencies, and incorporates an adaptive weighting module to enhance inter-modal feature interaction. To address the class imbalance issue, we introduce focal loss as the loss function and apply a data-level jump reduction strategy. Extensive experiments on 11 sub-datasets from the X-ITE pain database demonstrate that our method outperforms state-of-the-art (SOTA) approaches, achieving an average accuracy improvement of 3.88% and a 7.12% gain on the R-ETD sub-dataset. The model also exhibits more reliable performance on minority and challenging classes, highlighting its robustness across modalities and sub-datasets. Moreover, the proposed method offers improved computational efficiency and reduced resource consumption. These results suggest that the proposed framework is a promising solution for multimodal pain monitoring with strong potential for real-world clinical applications.
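
To illustrate how focal loss counters class imbalance, here is a minimal sketch of the standard multi-class formulation, FL(p_t) = -α (1 - p_t)^γ log(p_t). It is not the paper's implementation: the function names, the example logits, and the values γ = 2 and α = 1 are assumptions chosen for illustration, since the abstract does not state the hyperparameters used.

```python
import math


def softmax(logits):
    """Convert raw class scores to probabilities (numerically stable)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def focal_loss(logits, target, gamma=2.0, alpha=1.0):
    """Focal loss for one sample.

    gamma and alpha are illustrative defaults, not values from the paper.
    With gamma = 0 and alpha = 1 this reduces to plain cross-entropy.
    """
    p_t = softmax(logits)[target]  # predicted probability of the true class
    return -alpha * (1.0 - p_t) ** gamma * math.log(p_t)


def cross_entropy(logits, target):
    """Plain cross-entropy, expressed as focal loss with gamma = 0."""
    return focal_loss(logits, target, gamma=0.0)


# Hypothetical logits for a 3-class problem; the true class is index 0.
easy = [4.0, 0.0, 0.0]  # confidently correct prediction
hard = [0.0, 1.0, 0.0]  # true class receives low probability

for name, logits in (("easy", easy), ("hard", hard)):
    ce = cross_entropy(logits, 0)
    fl = focal_loss(logits, 0)
    print(f"{name}: cross-entropy={ce:.4f}, focal={fl:.6f}")
```

The factor (1 - p_t)^γ shrinks the loss of well-classified samples far more than that of misclassified ones, so hard samples, which are often from minority classes, contribute a larger share of the gradient.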

Fulltext Access

https://doi.org/10.1016/j.compbiomed.2025.111260

Citing

@article{WangBiModalPain2025,
  title = {AI-based bi-modal fusion system for automated clinical pain monitoring},
  author = {Wang, Huibin and Nienaber, Sören and Dinges, Laslo and Al-Hamadi, Ayoub},
  journal = {Computers in Biology and Medicine},
  year = {2025},
  doi = {10.1016/j.compbiomed.2025.111260}
}