Constructing a multimodal feature set for pain intensity classification

We present a multimodal feature set that integrates heterogeneous biosignal features from the BioVid and X-ITE databases, demonstrating that combining complementary modalities with balanced information distribution improves pain intensity classification accuracy by up to 8% while maintaining robustness when individual modalities are unavailable.

AI-based bi-modal fusion system for automated clinical pain monitoring

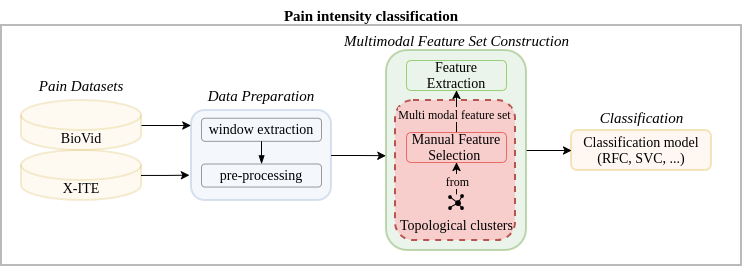

Previous approaches to pain intensity classification have typically relied on small sets of top-performing features to maximize accuracy. While effective in constrained scenarios, such strategies neglect the diverse range of modalities available in modern pain databases. In this work, we introduce a multimodal feature set (MMFS) that integrates heterogeneous features from each biosignal modality in the BioVid and X-ITE databases. Our approach captures a broad spectrum of complementary information, maintaining robustness even when individual modalities are unavailable. Experimental results show consistent performance improvements, with classification accuracy increasing by up to 8% overall and by 6% when evaluating all pain levels. Through detailed analyses of individual modalities, confusion matrices, and a modality ablation study, we demonstrate that the combined effect of multimodality and balanced information distribution drives these gains. Furthermore, feature importance analysis reveals which inputs contribute most to final predictions and which features are most beneficial for the more challenging pain classes.
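The abstract describes three ideas: pooling features from every modality into one set, running a modality ablation, and checking how information is distributed across modalities. The sketch below illustrates all three in plain Python. It is not the MMFS pipeline from the paper. It makes these assumptions:

- Fusion is plain feature-level concatenation.
- "Ablation" means leaving one modality out at a time.
- Feature names follow a hypothetical `modality_feature` convention (e.g. `eda_mean`).

The names `eda`, `ecg`, and `emg` and all values are illustrative placeholders.

```python
from typing import Dict, List, Sequence, Tuple

# Hypothetical per-modality feature vectors for a single sample;
# modality names and values are placeholders, not the paper's features.
Sample = Dict[str, List[float]]


def fuse(sample: Sample, modalities: Sequence[str]) -> List[float]:
    """Feature-level (early) fusion: concatenate the selected modalities'
    feature vectors in the given order."""
    fused: List[float] = []
    for name in modalities:
        fused.extend(sample[name])
    return fused


def ablation_configs(modalities: Sequence[str]) -> Dict[str, Tuple[str, ...]]:
    """Full modality set plus every leave-one-modality-out subset,
    as used in a simple modality ablation study."""
    full = tuple(modalities)
    configs: Dict[str, Tuple[str, ...]] = {"all": full}
    for dropped in full:
        configs[f"without {dropped}"] = tuple(m for m in full if m != dropped)
    return configs


def importance_by_modality(importances: Dict[str, float]) -> Dict[str, float]:
    """Sum per-feature importances by modality prefix (hypothetical
    'modality_feature' naming) and normalise the shares to sum to 1."""
    totals: Dict[str, float] = {}
    for feature, value in importances.items():
        modality = feature.split("_", 1)[0]
        totals[modality] = totals.get(modality, 0.0) + value
    grand_total = sum(totals.values())
    if grand_total == 0:
        return totals
    return {m: v / grand_total for m, v in totals.items()}


if __name__ == "__main__":
    sample = {"eda": [0.4, 1.2], "ecg": [72.0], "emg": [0.05, 0.3]}
    for label, subset in ablation_configs(["eda", "ecg", "emg"]).items():
        # Each subset would be used to train and evaluate a separate classifier.
        print(label, subset, len(fuse(sample, subset)))
```

In a real study, each ablation subset would be used to train and evaluate a separate classifier. The per-modality importance shares then give a rough view of whether information is balanced across modalities or concentrated in one.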

Full-text Access

https://doi.org/10.1007/s00521-026-11962-y

Citing

@article{NienaberMMFS2026,
  title = {Constructing a multimodal feature set for pain intensity classification},
  author = {Nienaber, Sören and Wang, Huibin and Dinges, Laslo and Al-Hamadi, Ayoub},
  journal = {Neural Computing and Applications},
  year = {2026},
  doi = {10.1007/s00521-026-11962-y}
}