Deep Learning-Based Gaze Estimation: A Review

A comprehensive review of gaze estimation methodologies, tracing the evolution from conventional model- and feature-based approaches to modern deep learning techniques, including CNNs, transformers, temporal models, and lightweight networks, alongside an analysis of open challenges and future directions.

Deep Learning-Based Gaze Estimation: A Review

Gaze estimation is a critical facet of understanding user intent and enhancing human–computer interaction, with applications spanning human–robot interaction, driver monitoring, virtual and augmented reality, and engagement analysis. The integration of deep learning has substantially advanced the field, yet unique challenges remain in adapting and optimizing models for precise, unconstrained gaze tracking across diverse environments and subjects.

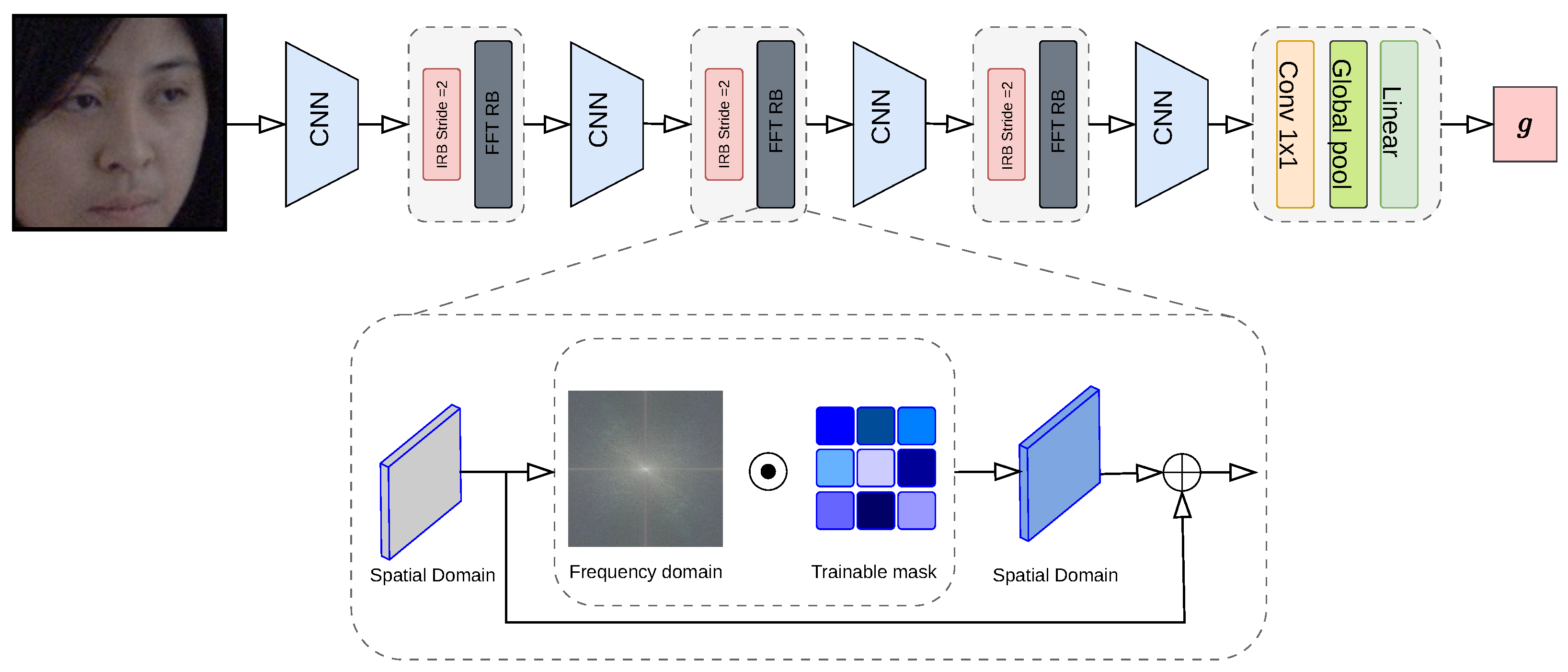

This paper presents a thorough review of recent developments in deep learning-based gaze estimation, tracing the evolution from traditional model-based and feature-based methods to sophisticated appearance-based deep learning techniques. We examine the key components of successful gaze estimation systems — input feature processing, neural network architectures, and data preprocessing — and provide a comprehensive comparison of existing methods, shedding light on their effectiveness and limitations within various implementation contexts.

Conventional gaze estimation methods are organized into three categories: model-based approaches, which utilize 3D geometric models of the eyeball; feature-based approaches, which identify key eye landmarks and map them to gaze directions via regression; and appearance-based approaches, which map raw image pixels directly to a gaze vector. While effective under constrained conditions, these methods struggle with variations in lighting, head pose, and camera setups.

Deep learning transformed the field from 2015 onward by overcoming many of these limitations through large-scale data-driven training. The review covers major deep learning paradigms applied to gaze estimation, including CNN-based architectures, temporal models for capturing dynamic gaze behavior, generative frameworks, transformer-based approaches, and lightweight networks suitable for mobile and embedded deployment. Challenges specific to cross-dataset generalization are also addressed, covering domain adaptation and domain generalization strategies that enable models to transfer across different subjects and environments without requiring target-domain labels.

Key Contributions

- Structured taxonomy: A clear categorization of gaze estimation methods from conventional to modern deep learning approaches

- Deep learning landscape: Coverage of CNNs, transformers, temporal models, generative frameworks, and lightweight architectures

- Generalization analysis: Review of domain adaptation and domain generalization techniques for cross-dataset robustness

- Open challenges: Identification of key obstacles — cross-person generalization, data dependency, and efficient real-time deployment — and promising future directions including multimodal integration and self-supervised learning

Fulltext Access

https://doi.org/10.3390/robotics15040069

Citation (BibTeX)

@article{abdelrahman2026deep,

author = {Ahmed A. Abdelrahman and Basheer Al-Tawil and Ayoub Al-Hamadi},

title = {Deep Learning-Based Gaze Estimation: A Review},

journal = {MDPI Robotics},

volume = {15},

number = {4},

pages = {69},

year = {2026},

month = mar,

doi = {10.3390/robotics15040069},

url = {https://doi.org/10.3390/robotics15040069}

}